Update June 5th 2020: OpenAI has announced a successor to GPT-2 in a newly published paper. Check out our GPT-3 model overview.

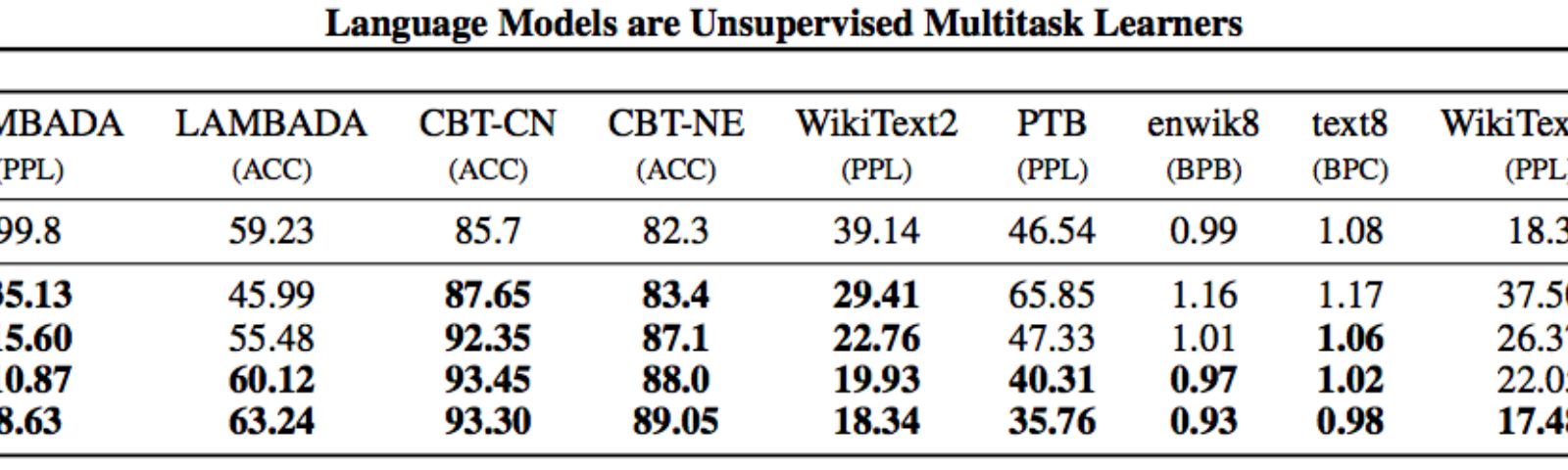

Status: Archive (code is provided as-is, no updates expected).

gpt-2: code and models from the paper 'Language Models are Unsupervised Multitask Learners'. You can read about GPT-2 and its staged release in our original blog post, 6 month follow-up post, and final post. We have also released a dataset for researchers to study their behaviors. Note that our original parameter counts were ...

With the gpt-2-simple package, fine-tuning and generation take a handful of calls: `import gpt_2_simple as gpt2`, then `gpt2.download_gpt2()` (the model is saved into the current directory under /models/124M/), `sess = gpt2.start_tf_sess()`, `gpt2.finetune(sess, 'shakespeare.txt', steps=1000)` (steps is the max number of training steps), and finally `gpt2.generate(sess)`. The generated model checkpoints are by default in /checkpoint/run1. If you want to load a ...

OpenAI recently published a blog post on their GPT-2 language model. This tutorial shows you how to run the text generator code yourself. As stated in their blog post:

[GPT-2 is an] unsupervised language model which generates coherent paragraphs of text, achieves state-of-the-art performance on many language modeling benchmarks, and performs rudimentary reading comprehension, machine translation, question answering, and summarization—all without task-specific training.

#1: Install system-wide dependencies

Ensure your system has the following packages installed:

- CUDA

- cuDNN

- NVIDIA graphics drivers

To install all this in one line, you can use Lambda Stack.
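As a quick sanity check before moving on, the sketch below looks for two command-line tools on your PATH: `nvidia-smi`, which ships with the NVIDIA driver, and `nvcc`, which ships with the CUDA toolkit. The helper name `find_gpu_tools` is made up for this illustration, and the check assumes both tools were installed to a directory on PATH. cuDNN is a library without a command-line tool of its own, so this check does not cover it.

```python
import shutil


def find_gpu_tools(tools=("nvidia-smi", "nvcc")):
    """Map each tool name to its resolved path, or None if it is not on PATH.

    Hypothetical helper for illustration; it only inspects PATH and does not
    verify driver, CUDA, or cuDNN versions.
    """
    return {tool: shutil.which(tool) for tool in tools}


if __name__ == "__main__":
    for tool, path in find_gpu_tools().items():
        print(f"{tool}: {path or 'not found on PATH'}")
```

A missing entry does not necessarily mean the package is absent; it may simply be installed somewhere that is not on PATH.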

#2: Install Python dependencies & GPT-2 code

#3: Run the model

Conditionally generated samples from the paper use top-k random sampling with k = 40. You'll want to set k = 40 in interactive_conditional_samples.py. Either edit the file manually or use a one-line substitution command.
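To make the setting less abstract, here is a minimal sketch of what top-k random sampling does at each generation step: keep only the k highest-scoring tokens, renormalize their probabilities, and draw from that reduced set. This is a plain-Python illustration, not the GPT-2 code itself; the function name `top_k_sample` and the toy scores are invented for the example.

```python
import math
import random


def top_k_sample(logits, k=40, rng=None):
    """Sample an index from `logits`, restricted to the k highest-scoring entries.

    Illustrative sketch only; the real model applies the same idea to a
    vocabulary-sized score vector at every step.
    """
    if not logits:
        raise ValueError("logits must be non-empty")
    if k <= 0:
        raise ValueError("k must be positive")
    rng = rng or random.Random()
    # Indices of the k largest logits (all of them if k exceeds the vocabulary).
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    # Softmax over the surviving logits only; subtract the max for stability.
    peak = logits[top[0]]
    weights = [math.exp(logits[i] - peak) for i in top]
    # Draw one index in proportion to its renormalized probability.
    return rng.choices(top, weights=weights, k=1)[0]


toy_logits = [2.0, 0.5, -1.0, 3.0, 0.0]  # made-up scores for a 5-token vocabulary
draws = [top_k_sample(toy_logits, k=2, rng=random.Random(seed)) for seed in range(200)]
```

With k = 2, only the two best-scoring tokens (indices 3 and 0 here) can ever be drawn. A larger k such as 40 lets more of the distribution through while still cutting off the long tail of unlikely tokens.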

Now, you're ready to run the model!

Update: OpenAI has now released their larger 345M model. You can change 345M above to 117M to download the smaller one. Here's the 117M model's attempt at writing the rest of this article based on the first paragraph:

Now here's the 345M model's attempt at writing the rest of this article. Let's see the difference. Again, this is just one sample from the network, but the larger model definitely produces a more accurate-sounding code tutorial.

It at least seems to realize that open-ai should appear in the GitHub URLs we're cloning. Looks like we'll be keeping our jobs for a while longer :).

Further Reading

- Read more about OpenAI's language model in their blog post Better Language Models and Their Implications.

- Read more about top-k random sampling in section 5.4 of Hierarchical Neural Story Generation.